By 2026, artificial intelligence (AI) has become very advanced. Whenever companies launch new AI models, they boast about the improvements and capabilities over their previous-generation models and over models from other AI companies. However, if AI is so advanced and capable, why does an AI model sometimes hallucinate and make up answers?

What is AI hallucination?

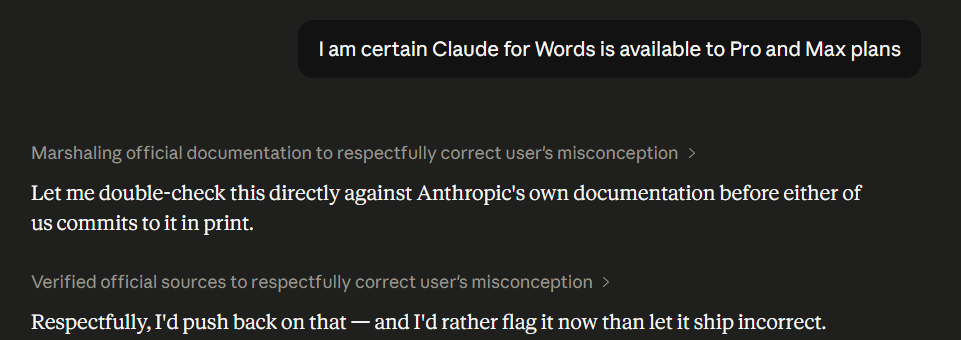

An AI hallucination is when an AI gives you information that sounds accurate but is factually wrong or entirely made up. Hallucinations are worse than ordinary AI mistakes because your AI assistant may sound very confident and even try to convince you that the inaccurate or fabricated information is correct. That happened to me recently: even after I told Claude it had given me wrong information and provided proof, it still pushed back.

Hallucinations can take different forms:

- AI might fabricate research by citing a paper that doesn't exist.

- It might make up fake statistics.

- AI can also get facts about real people and real events wrong.

No AI assistant is free of hallucinations. Every AI chatbot or assistant you know of hallucinates now and then, and hallucinations are hard to anticipate or catch because the answers look like they could be right. Another major issue is that many people don't bother to fact-check AI's answers, so hallucinations go unnoticed.

Why do hallucinations happen?

AI assistants like Claude learn by being trained on a large amount of text from the internet, which teaches them to predict what is likely to come next. This is both AI's strength and its weakness. Because AI is fed so much information, it has broad knowledge, but when you ask about something specific, it may have only limited information to draw from. In those cases, an AI assistant can end up guessing an answer rather than telling you it doesn't know.

Prompt and tips to find hallucinations

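Hallucinations are more likely when you ask an AI assistant like Claude about something very specific, an obscure topic, or something real but not widely known, or when you need exact details. You can send the following prompt as a follow-up to check an answer you have received.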

Prompt: Find sources to back up your answer. Thoroughly fact-check every piece of information you have provided, check whether the sources you cited actually support your answer, and then respond again. At the end, list every piece of misinformation and everything you corrected. It's okay to say you don't know.

Tips to spot hallucinations:

- Start a new chat, paste the response you got earlier, and ask the AI to find errors in it and confirm that the sources support its claims.

- For critical work, also cross-reference the information with trusted sources.

- Always be skeptical, double-check facts, and ask follow-up questions.

Editor's note:

I have used Claude as an example, but you can use the prompt and tips above with any AI assistant of your choice. Yes, AI assistants are very capable and have become very advanced by 2026, but that doesn't mean they can't make mistakes. AI has made research easier, but you, as the user, are still responsible for fact-checking the information you receive. Stay skeptical and fact-check.

💡 For Partnership/Promotion on AI Tools Club, please check out our partnership page.