The world's most important software has always had hidden vulnerabilities, sometimes for years, sometimes for decades. However, finding them isn't an easy task; it requires a rare mix of deep expertise and patience. Now, artificial intelligence (AI) is changing that, and not for the better. Anthropic has just introduced Project Glasswing, an urgent, industry-wide initiative to deploy frontier AI capabilities for cyber defense before attackers get there first.

What Is Project Glasswing?

Project Glasswing brings together Amazon Web Services (AWS), Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks to secure the world's most critical software.

The initiative is powered by a new, unreleased AI model called Claude Mythos Preview, named after the Ancient Greek word for "utterance" or "narrative," the system of stories through which civilizations made sense of the world.

The name of the project has two meanings.

- First, the glasswing butterfly's transparent wings let it hide in plain sight, much like the long-hidden vulnerabilities this project aims to uncover.

- Second, these clear wings protect the butterfly, much as the transparency Anthropic promotes in its work is meant to protect.

Claude Mythos Preview is a general-purpose, unreleased frontier model, and it reveals a stark fact: AI models have reached a level of coding capability at which they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities.

Why Now? The Urgency Behind the Initiative

The software that all of us rely on every day, which is responsible for running banking systems, storing medical records, connecting logistics networks, and keeping power grids functioning, has always contained bugs. Many are minor, but some are serious security flaws that, if found, could allow cyberattackers to hijack systems, disrupt operations, or steal data.

The problem is quickly escalating. On the global stage, state-sponsored attacks have threatened to compromise the infrastructure that underpins both civilian life and military readiness. The current global financial costs of cybercrime are challenging to estimate, but might be around $500B every year.

With the latest frontier AI models, the cost, effort, and level of expertise required to find and exploit software vulnerabilities have all dropped dramatically. What makes Mythos Preview a particularly significant moment is that the model was not deliberately trained for its security capabilities. They emerged as a downstream consequence of general improvements in code, reasoning, and autonomy.

The same improvements that make the model substantially more effective at patching vulnerabilities also make it substantially more effective at exploiting them.

What Claude Mythos Preview Has Already Found

In the past few weeks, Anthropic used Claude Mythos Preview to find thousands of zero-day vulnerabilities, meaning flaws that the software's developers did not know about. Most of these were critical, and they span every major operating system and web browser, as well as other important software. The model identified nearly all of these vulnerabilities and developed many related exploits entirely autonomously, without any human guidance. Three specific findings stand out:

- A 27-year-old OpenBSD vulnerability: Mythos Preview found a flaw in an operating system with a reputation as one of the most security-hardened in the world, used to run firewalls and other critical infrastructure. The vulnerability allowed an attacker to remotely crash any machine running the operating system just by connecting to it.

- A 16-year-old FFmpeg bug: The model found a flaw in a line of code that automated testing tools had hit five million times without ever catching the problem. FFmpeg is used by countless applications to encode and decode video.

- A Linux kernel privilege escalation chain: The model autonomously found and chained together several vulnerabilities in the Linux kernel (the software that runs most of the world's servers) to allow an attacker to escalate from ordinary user access to complete control of the machine.

All three vulnerabilities have been reported to the maintainers of the relevant software and have now been patched. They represent only a fraction of what Mythos Preview has found. Over 99% of the vulnerabilities found have not yet been patched, so Anthropic is not disclosing details about them at this time, as doing so would be irresponsible.

The Model's Performance in Numbers

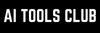

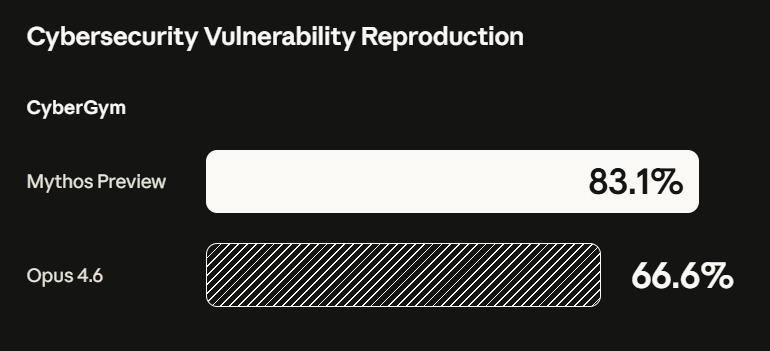

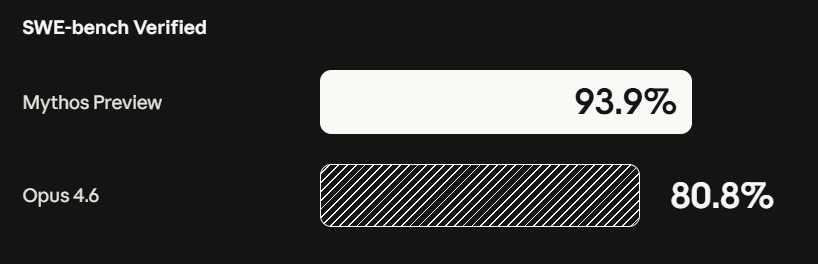

Benchmarks make the capability gap between Mythos Preview and its predecessors concrete:

- On CyberGym (cybersecurity vulnerability reproduction), Mythos Preview scored 83.1% versus 66.6% for Claude Opus 4.6.

- On SWE-bench Verified (agentic coding), Mythos Preview reached 93.9% compared to 80.8% for Opus 4.6.

- On Humanity's Last Exam with tools, Mythos Preview scored 64.7% versus 53.1% for Opus 4.6.
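To make the size of these gaps easier to compare, the following is a minimal sketch that computes the difference in percentage points and the gain relative to the Opus 4.6 score. The scores are copied from the list above; the function names and the choice of these two metrics are illustrative assumptions, not part of Anthropic's reporting.

```python
# Scores in percent, as quoted in the list above: (Mythos Preview, Claude Opus 4.6).
SCORES = {
    "CyberGym": (83.1, 66.6),
    "SWE-bench Verified": (93.9, 80.8),
    "Humanity's Last Exam (with tools)": (64.7, 53.1),
}


def gap_in_points(new: float, old: float) -> float:
    """Absolute difference in percentage points, rounded to one decimal."""
    return round(new - old, 1)


def relative_gain(new: float, old: float) -> float:
    """Improvement relative to the older score, in percent, rounded to one decimal."""
    return round((new - old) / old * 100, 1)


def summarize(scores: dict) -> dict:
    """Map each benchmark name to (gap in points, relative gain in percent)."""
    return {
        name: (gap_in_points(new, old), relative_gain(new, old))
        for name, (new, old) in scores.items()
    }


if __name__ == "__main__":
    for name, (points, rel) in summarize(SCORES).items():
        print(f"{name}: +{points} points ({rel}% relative gain)")
```

By this calculation, the CyberGym gap is 16.5 percentage points, the largest of the three, while SWE-bench Verified shows a 13.1-point gap on an already high baseline.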

The autonomous nature of these findings is a key point. Engineers at Anthropic, with no formal security training, have asked Mythos Preview to find remote code execution vulnerabilities overnight, and woken up the following morning to a complete, working exploit.

How Project Glasswing Will Operate

Anthropic is committing up to $100M in usage credits for Mythos Preview across its partners and additional participants. The company has also donated $2.5M to Alpha-Omega and OpenSSF through the Linux Foundation, and $1.5M to the Apache Software Foundation, to allow maintainers of open-source software to respond to this changing industry.

Apart from the 12 named launch partners, Anthropic has extended access to a group of over 40 additional organizations that build or maintain critical software infrastructure, allowing them to use the model to scan and secure both first-party and open-source systems.

The work is expected to focus on:

- Local vulnerability detection

- Black-box testing of binaries

- Securing endpoints

- Penetration testing of systems

Within 90 days, Anthropic will publicly report what it has learned, as well as the vulnerabilities it has fixed and the improvements it has made that can be disclosed. In the long term, the company plans to collaborate with leading security organizations to develop practical recommendations on vulnerability disclosure processes, patching automation, open-source and supply-chain security, and secure-by-design software development practices.

The Dual-Edged Reality

Anthropic is honest about the challenge in its recent announcement. Most security tooling has historically benefited defenders more than attackers. Anthropic believes the same will eventually hold true for powerful language models, but the transitional period may be rough. By releasing this model initially to a limited group of critical industry partners and open-source developers, Anthropic aims to let defenders start securing the most important systems before models with similar capabilities become broadly available.

Anthropic does not plan to make Claude Mythos Preview generally available. Its eventual goal is to allow users to safely deploy Mythos-class models at scale, both for cybersecurity purposes and for the many other benefits that such highly capable models will bring. Getting there requires progress on cybersecurity safeguards that detect and block the model's most dangerous outputs. Anthropic plans to launch new safeguards with an upcoming Claude Opus model, allowing it to improve and refine them without the same level of risk as Mythos Preview.

In Conclusion

Project Glasswing is not just a product announcement; it shows how AI capabilities have crossed a threshold that demands a fundamental rethinking of how software security works at every level. Anthropic recommends that organizations use currently available frontier models to strengthen defenses now, shorten patch cycles, review vulnerability disclosure policies, and automate technical incident response pipelines. The volume of security work is going to increase significantly, and everything that requires manual triage is likely to benefit from the use of a scaled model.

The race between defenders and attackers has always been asymmetric. Project Glasswing is one of the most ambitious attempts yet to tip that balance in the right direction while there is still time to do so.
