For years, adding AI to a product followed the same playbook: pick one of the biggest frontier models, wrap it in a prompt, ship it, then spend months adapting your workflows around what the model can do while quietly accepting what it cannot. That era of compromise is ending. A model trained on everything is optimized for nothing, and the tasks that matter most, where accuracy affects revenue, risk, and reputation, aren't average. That is where Oumi comes in: a platform designed to let any team build purpose-built AI models in hours rather than months.

What Is Oumi?

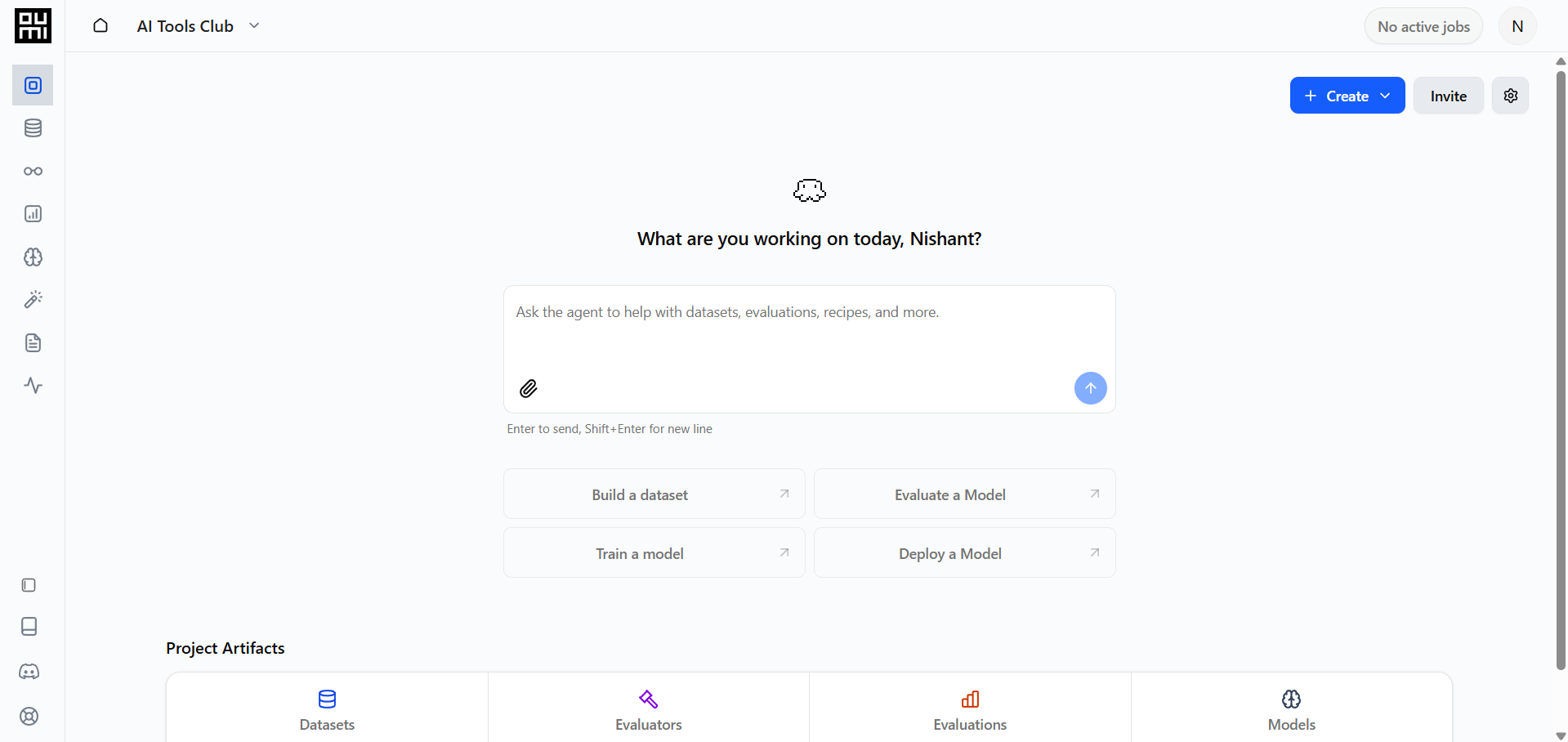

Oumi is an AI-native Custom Model Development Platform that automates the entire model development lifecycle, including data synthesis, evaluation, training, and deployment. Unlike generic LLM APIs, which you rent access to, Oumi lets you own the model weights, run them anywhere, and keep improving them on your own terms.

Oumi is backed by researchers at 14+ leading institutions, including Stanford, MIT, Berkeley, and Princeton, and was built by the team behind Google's PaLM/Gemini and state-of-the-art small language models (SLMs) at Apple, Microsoft, and Cohere. It launched as an open-source project and has since earned over 9,000 GitHub stars, with enterprise adoption across healthcare, financial services, insurance, gaming, and manufacturing.

How Oumi Works: Four Steps to Production

Oumi breaks the custom model lifecycle into a clean, automated loop anyone can follow:

- Evaluate: Describe your task, and Oumi can automatically generate complete test sets, recommended metrics, and ready-to-run evaluators, with full visibility into the results so you can decide where to focus next.

- Synthesize: Once weaknesses are found, Oumi automatically synthesizes high-quality training examples that target those failure modes, removing the need for manual curation or labeling. You review the examples before any of them are used.

- Train: Oumi supports full fine-tuning, parameter-efficient fine-tuning, and on-policy distillation, and it automatically re-evaluates each trained model so you can measure the improvement.

- Deploy: Ship your model with built-in lightweight monitoring that tracks performance over time and feeds insights back into the evaluation cycle.
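To make the feedback loop concrete, here is a minimal sketch of the evaluate, synthesize, and train cycle in plain Python. It is not Oumi's API: `Model`, `evaluate`, `synthesize`, `train`, and `improve` are hypothetical stand-ins, and a "model" here is just a set of task categories it has learned. Deployment and monitoring are left out; in the workflow described above, monitoring would feed new failure cases back into the test set.

```python
from dataclasses import dataclass, field


# Hypothetical stand-ins for illustration only; none of these are Oumi functions.
@dataclass
class Model:
    version: int = 0
    skills: set = field(default_factory=set)  # task categories the toy model handles


def evaluate(model, test_set):
    """Return the test cases the current model fails."""
    return [case for case in test_set if case not in model.skills]


def synthesize(failures):
    """Produce one training example aimed at each failure mode."""
    return [f"example for {case}" for case in failures]


def train(model, examples):
    """Return a new model version that has learned the targeted cases."""
    learned = {ex.removeprefix("example for ") for ex in examples}
    return Model(version=model.version + 1, skills=model.skills | learned)


def improve(model, test_set, max_rounds=3):
    """Repeat evaluate -> synthesize -> train until nothing fails or rounds run out."""
    for _ in range(max_rounds):
        failures = evaluate(model, test_set)
        if not failures:
            break  # every test passes; ready to deploy
        examples = synthesize(failures)  # in practice, a human reviews these first
        model = train(model, examples)  # the next loop iteration re-evaluates it
    return model


if __name__ == "__main__":
    tasks = ["refunds", "claims triage", "billing"]
    final = improve(Model(), tasks)
    print(final.version, evaluate(final, tasks))
```

The key design point is that training never ends the cycle on its own: every new model version goes straight back through evaluation, which is what lets the loop decide whether another round of synthesis is needed.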

Why Custom Beats Generic: The Real Numbers

The case for Oumi isn't philosophical; it's economic and operational:

- 50% higher accuracy on task-specific benchmarks vs. frontier models.

- 10× lower cost to run: custom 3–7B-parameter models beat GPT-5.4 accuracy on specific tasks while costing 10× less to operate.

- 10× lower latency, which can be critical for agentic workflows where multiple model calls compound delays.

- 100× faster development: what used to take weeks of manual iteration now runs in hours.

Real-world results back this up. A healthcare provider built 80+ custom models, achieving 20% higher quality and 70% lower cost, permanently replacing frontier LLM APIs and saving $2.3M annually. A national insurer achieved a 100× cost reduction in high-volume claims triage, cutting costs from $10 to $0.10 per classification.

Getting Started with Oumi

You don't need a machine learning PhD to start building your own AI model. Non-experts can build production-grade models from a single prompt, while AI experts can move faster with full visibility, control, and the ability to intervene at any step.

The Bottom Line

Anyone can now build an AI model, so you don't have to rely on generic models trained on everything and optimized for nothing. Oumi makes precision accessible by letting you build a model for your specific work, whether you are a solo software developer, a growing startup, or an enterprise running millions of inference calls daily. You no longer need to rent generic intelligence when you can own specialized intelligence.

💡 For Partnership/Promotion on AI Tools Club, please check out our partnership page.