As companies scale rapidly, the real problem isn't storing data but understanding it. Data keeps piling up, yet employees have too little time to make sense of it. Late last month, OpenAI published an engineering deep dive, "Inside Our In-House Data Agent," that offers a rare look at how a modern AI company tackles its own data challenges at scale.

This isn't a product launch but a candid look at how one of the most sophisticated AI labs built a bespoke, self-learning data agent that helps its employees go from question to insight in minutes rather than days.


The Problem: 600 Petabytes and Counting

Unsurprisingly, OpenAI operates at enormous scale. Its internal data platform spans more than 600 petabytes across roughly 70,000 datasets and serves more than 3,500 internal users across Engineering, Product, Research, Finance, Go-To-Market, and Data Science.

At that size, the biggest bottleneck was human bandwidth. Analysts spent significant time hunting for the right table, decoding business logic buried in SQL written months earlier, and running query after query to validate their results. Institutional knowledge lived in the heads of a few data experts, a fragile single point of failure whenever a team needed answers fast.

The status quo wasn't working, and the traditional enterprise response of building more dashboards wasn't going to fix it.

The Solution: A Conversational AI Teammate

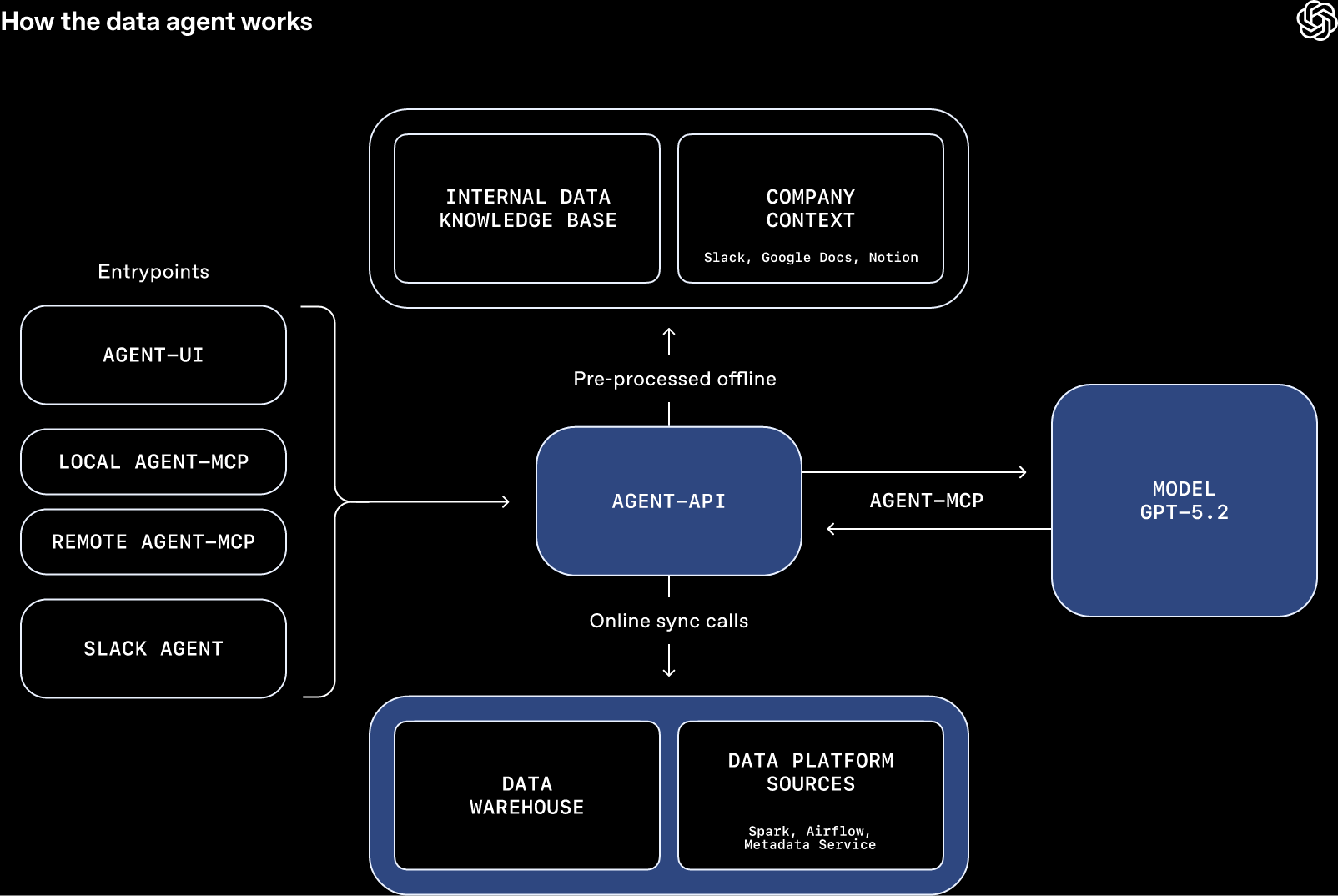

Rather than bolt another layer of tooling onto an already complex system, OpenAI built an internal data agent from the ground up. Powered by GPT-5.2, the agent functions less like a query tool and more like a knowledgeable analyst embedded in the workflow. It lives where employees already work: in Slack, in a web interface, inside developer IDEs, and directly within OpenAI's internal ChatGPT application via an MCP connector.

The agent handles complex, open-ended questions end to end. A team member can ask something like "Which product experiments showed meaningful retention improvements last quarter, and what drove the variation?" and the agent will locate the relevant datasets, write and run queries, validate the outputs, and synthesize a human-readable answer, all without hand-holding. What used to take several rounds of back-and-forth with a data engineer can now happen in minutes.


Key features and capabilities include:

- Natural language querying: Users ask questions in plain English; the data agent handles all underlying SQL, schema navigation, and data joins automatically.

- End-to-end analysis: From question intake to finding the right table, executing queries, and synthesizing findings, the AI agent manages the full analytical pipeline.

- Multi-platform availability: Accessible via Slack, a web UI, IDE integrations, the Codex CLI (via MCP), and the internal ChatGPT app, so it meets employees where they already work.

- Conversational memory: The AI agent preserves context across turns in a conversation, allowing users to naturally refine or follow up on questions without starting over.

- Closed-loop self-correction: If a query fails or returns suspicious results, the AI data agent autonomously diagnoses the error, adjusts its approach, and retries.
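The closed-loop self-correction described above can be sketched as a retry loop that feeds each failure back into the next attempt. This is a minimal, hypothetical illustration, not OpenAI's implementation: `answer_with_retries` and the `generate_sql` callable are invented names, the model call is replaced by a stub, and an in-memory SQLite database stands in for the warehouse.

```python
import sqlite3
from typing import Callable, List, Optional, Tuple

# Hypothetical model step: given the question and the previous attempt's error
# (None on the first try), return a SQL string.
SqlGenerator = Callable[[str, Optional[str]], str]


def answer_with_retries(conn: sqlite3.Connection, question: str,
                        generate_sql: SqlGenerator, max_attempts: int = 3) -> List[Tuple]:
    """Run generated SQL, feeding each failure back to the generator before retrying."""
    last_error: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        sql = generate_sql(question, last_error)
        try:
            rows = conn.execute(sql).fetchall()
        except sqlite3.Error as exc:
            # Hard failure (missing table, syntax error): record it and try again.
            last_error = f"attempt {attempt}: {exc}"
            continue
        if not rows:
            # Suspicious result: treat an empty answer as a reason to retry.
            last_error = f"attempt {attempt}: query returned no rows"
            continue
        return rows
    raise RuntimeError(f"gave up after {max_attempts} attempts; last error: {last_error}")


# Usage with an in-memory database and a stub generator standing in for the model.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE signups (region TEXT, users INTEGER)")
conn.executemany("INSERT INTO signups VALUES (?, ?)", [("EU", 120), ("US", 300)])


def stub_generator(question: str, error: Optional[str]) -> str:
    # First attempt uses a wrong table name; once it sees an error, it corrects it.
    if error is None:
        return "SELECT region, users FROM signup"
    return "SELECT region, users FROM signups ORDER BY users DESC"


print(answer_with_retries(conn, "Signups by region?", stub_generator))
# [('US', 300), ('EU', 120)]
```

Capping attempts is a deliberate choice in this sketch: a generator that never converges would otherwise loop forever, so the loop surfaces the last error instead of returning a guess.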

What Makes It Trustworthy: Layered Contextual Grounding

The most technically interesting aspect of the agent isn't the model powering it but the multi-layered context system that keeps its answers grounded in reality. Having a raw model write SQL directly is notoriously unreliable: schemas can be misleading, business logic is invisible to the model, and tribal knowledge about how metrics are actually defined rarely makes it into a database column. OpenAI addressed this with several overlapping context layers:

- Table usage metadata: Deep schema understanding, including lineage, usage patterns, and ownership, so the data agent knows not just what a table is, but how it's actually used.

- Human annotations: Domain experts across teams have contributed plain-language annotations that explain the meaning of datasets beyond what the metadata alone can convey.

- Codex-powered code enrichment: The data agent uses Codex to read the actual pipeline code that produces tables, extracting semantic meaning from how data is constructed at the source rather than just how it's labeled.

- Institutional and organizational context: Business definitions, KPI rules, and organizational nuances (for example, how an active user is defined differently across regions) are baked into the agent's context, preventing the class of subtle errors that plague most automated analytics tools.
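One way to picture these layers working together is to flatten them into a single block of text the model sees before the question. The sketch below is purely illustrative: `TableContext`, `build_context`, the example table, and the business rule are invented for this example, and OpenAI has not published how its agent actually assembles context.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TableContext:
    """Hypothetical per-table bundle of the context layers listed above."""
    name: str
    schema: dict[str, str]                                # column -> type (metadata)
    usage: list[str] = field(default_factory=list)        # lineage, usage, ownership
    annotations: list[str] = field(default_factory=list)  # expert-written notes
    code_summary: str = ""                                # what the producing pipeline does


def build_context(tables: list[TableContext], business_rules: dict[str, str]) -> str:
    """Flatten every layer into one text block placed ahead of the user's question."""
    lines: list[str] = []
    for t in tables:
        cols = ", ".join(f"{col} {typ}" for col, typ in t.schema.items())
        lines.append(f"## Table {t.name}")
        lines.append(f"Columns: {cols}")
        lines.extend(f"Usage: {note}" for note in t.usage)
        lines.extend(f"Annotation: {note}" for note in t.annotations)
        if t.code_summary:
            lines.append(f"Produced by: {t.code_summary}")
    # Organizational definitions apply across tables, so they come last.
    lines.append("## Business definitions")
    lines.extend(f"- {term}: {meaning}" for term, meaning in sorted(business_rules.items()))
    return "\n".join(lines)


# Illustrative values only; this table and rule are invented for the example.
orders = TableContext(
    name="orders_daily",
    schema={"order_date": "DATE", "region": "TEXT", "revenue_usd": "REAL"},
    usage=["Queried weekly by the finance team; owned by data platform"],
    annotations=["Excludes refunded orders"],
    code_summary="nightly job that aggregates raw order events by day and region",
)
rules = {"active user": "at least one session in the trailing 28 days"}
print(build_context([orders], rules))
```

The annotation and code-summary lines carry exactly the information a schema alone cannot, such as the fact that refunds are excluded.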

The Engineering Lessons Worth Stealing

OpenAI's engineers were candid about the mistakes they made and the counterintuitive lessons they learned building this system:

- Fewer tools, not more: When the team gave the data agent access to its full tool set, overlapping functionality confused it and degraded reliability. Consolidating and restricting the available tools, by contrast, produced dramatically better results.

- High-level guidance beats rigid instructions: Highly prescriptive prompts backfired because analytical questions vary in shape even when they look similar, and overly detailed instructions pushed the agent down the wrong path. Trusting GPT-5's reasoning to select the right execution path made the agent more robust.

- Code context beats metadata alone: Schema definitions describe the shape of the data; the code that produces it reveals what it actually means. The latter proved far more valuable for generating accurate, trustworthy answers.

- Continuous evaluation is non-negotiable: The team uses the Evals API to run automated tests that compare the agent's outputs against verified benchmark answers, effectively unit-testing the agent's reasoning to catch regressions before they affect decisions.
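The evaluation idea can be sketched locally as a regression suite: each case pairs a question with a verified answer, and the harness reports every mismatch. This sketch does not use or reproduce the Evals API; `EvalCase`, `run_evals`, the stub agent, and the numbers are hypothetical, and it assumes numeric answers compared within a tolerance.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One benchmark question paired with its verified answer."""
    question: str
    expected: float
    tolerance: float = 1e-6  # allowed absolute difference for numeric answers


def run_evals(agent: Callable[[str], float], cases: list[EvalCase]) -> dict:
    """Ask the agent every benchmark question and collect answers outside tolerance."""
    failures = []
    for case in cases:
        got = agent(case.question)
        if abs(got - case.expected) > case.tolerance:
            failures.append((case.question, case.expected, got))
    return {"passed": len(cases) - len(failures), "total": len(cases), "failures": failures}


# Stub agent with canned answers; a real agent would query the warehouse.
canned = {"Weekly active users last week?": 1520.0, "Median session length (min)?": 12.0}


def stub_agent(question: str) -> float:
    return canned[question]


cases = [
    EvalCase("Weekly active users last week?", 1520.0),
    EvalCase("Median session length (min)?", 12.5, tolerance=0.1),
]
report = run_evals(stub_agent, cases)
print(report)
# {'passed': 1, 'total': 2, 'failures': [('Median session length (min)?', 12.5, 12.0)]}
```

In a setup like this, the suite would run on every prompt or tool change, and a non-empty `failures` list would block the change, much as failing unit tests gate a code merge.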

Editor's Note: Why This Matters Beyond OpenAI

This internal tool will probably never appear in OpenAI's product catalog unless the company builds a general-purpose version. Even so, the architecture, philosophy, and hard-won lessons it represents are the clearest public blueprint yet for what enterprise AI data infrastructure may look like over the next three to five years. The shift from "AI writes SQL" to "AI reasons over data with institutional context and memory" is a meaningful one, and every organization sitting on terabytes of underused data should be paying attention.

The tools OpenAI used to build this agent, including the GPT-5.2 API, Codex, the Embeddings API, and the Evals API, are all publicly available to developers. The blueprint is out there; the context, however, you'll have to build yourself. The real question is whether your organization is building the contextual foundation that makes AI a genuinely useful analytical partner, or just another expensive autocomplete for your data warehouse.
