Google and OpenAI each released a new AI model on the same day, and the timing is telling. Both models aim to make everyday AI use feel faster, more practical, and genuinely useful for real-world work; however, each is optimized for a different environment and a different kind of work.

Google unveiled Gemini 3.1 Flash-Lite, the fastest and most cost-efficient model in its Gemini 3 series, aimed squarely at developers and enterprise teams running workloads at scale.

OpenAI, on the same day, shipped GPT‑5.3 Instant, a new ChatGPT model focused on making everyday conversations feel less robotic and more reliable.

Although both AI models share a release date, their design priorities couldn't be more different. Here's what you need to know.

Gemini 3.1 Flash-Lite vs GPT-5.3 Instant (Side-by-Side):


Gemini 3.1 Flash-Lite by Google:

Google is positioning Gemini 3.1 Flash-Lite not as a chat companion but as infrastructure. It is built for high-volume developer and enterprise workloads, where processing costs can make or break a product at scale. Pricing is $0.25 per million input tokens and $1.50 per million output tokens, making it one of the most cost-competitive models in its tier.
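To make the per-token pricing concrete, here is a small cost estimator using the two prices quoted above. The workload in the usage section (10,000 requests a day, about 2,000 input and 300 output tokens each) is purely hypothetical, and the function name is illustrative; real bills also depend on factors this sketch ignores.

```python
# Prices quoted in the article for Gemini 3.1 Flash-Lite, in USD per million tokens.
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 1.50


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for a given number of input and output tokens."""
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_M
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_M
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical workload: 10,000 requests/day, ~2,000 input and ~300 output tokens each.
    requests_per_day = 10_000
    daily = estimate_cost_usd(requests_per_day * 2_000, requests_per_day * 300)
    print(f"Estimated daily cost: ${daily:.2f}")  # 20M input + 3M output tokens -> $9.50
```

Note that output tokens cost six times as much as input tokens, so for verbose tasks the output side tends to dominate the bill.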

But cheap doesn't mean slow or less capable. Compared with Gemini 2.5 Flash, the new model delivers meaningful performance gains, according to the Artificial Analysis benchmark:

- 2.5× faster Time to First Answer Token, delivering the near-instant initial responses that real-time applications depend on.

- 45% higher output speed, making it viable for high-frequency pipelines.

- An Elo score of 1432 on the Arena.ai Leaderboard, outperforming other models in its tier.

- 86.9% on GPQA Diamond and 76.8% on MMMU Pro, scores that surpass even larger Gemini models from prior generations, such as Gemini 2.5 Flash.
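The two speed figures combine in a way that is easy to estimate. The sketch below assumes a deliberately simplified latency model (time to first token plus output tokens divided by streaming speed) and applies the reported 2.5× and 45% gains to a baseline you would measure yourself on Gemini 2.5 Flash. The baseline numbers in the usage section are made up for illustration, not measurements.

```python
TTFT_SPEEDUP = 2.5         # reported: 2.5x faster Time to First Answer Token
OUTPUT_SPEED_GAIN = 1.45   # reported: 45% higher output speed


def response_latency_s(ttft_s: float, tokens_per_s: float, output_tokens: int) -> float:
    """Simplified model: wait for the first token, then stream the output at a fixed rate."""
    return ttft_s + output_tokens / tokens_per_s


def projected_latency_s(base_ttft_s: float, base_tokens_per_s: float, output_tokens: int) -> float:
    """Apply the reported gains to a baseline measured on Gemini 2.5 Flash."""
    return response_latency_s(
        base_ttft_s / TTFT_SPEEDUP,
        base_tokens_per_s * OUTPUT_SPEED_GAIN,
        output_tokens,
    )


if __name__ == "__main__":
    # Hypothetical baseline: 1.0 s to first token, 200 tokens/s, 500-token answer.
    before = response_latency_s(1.0, 200.0, 500)
    after = projected_latency_s(1.0, 200.0, 500)
    print(f"baseline: {before:.2f} s, projected: {after:.2f} s")
```

Under these made-up inputs, the response drops from 3.50 s to about 2.12 s. Real workloads will differ, since benchmark averages rarely match a specific prompt mix.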

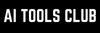

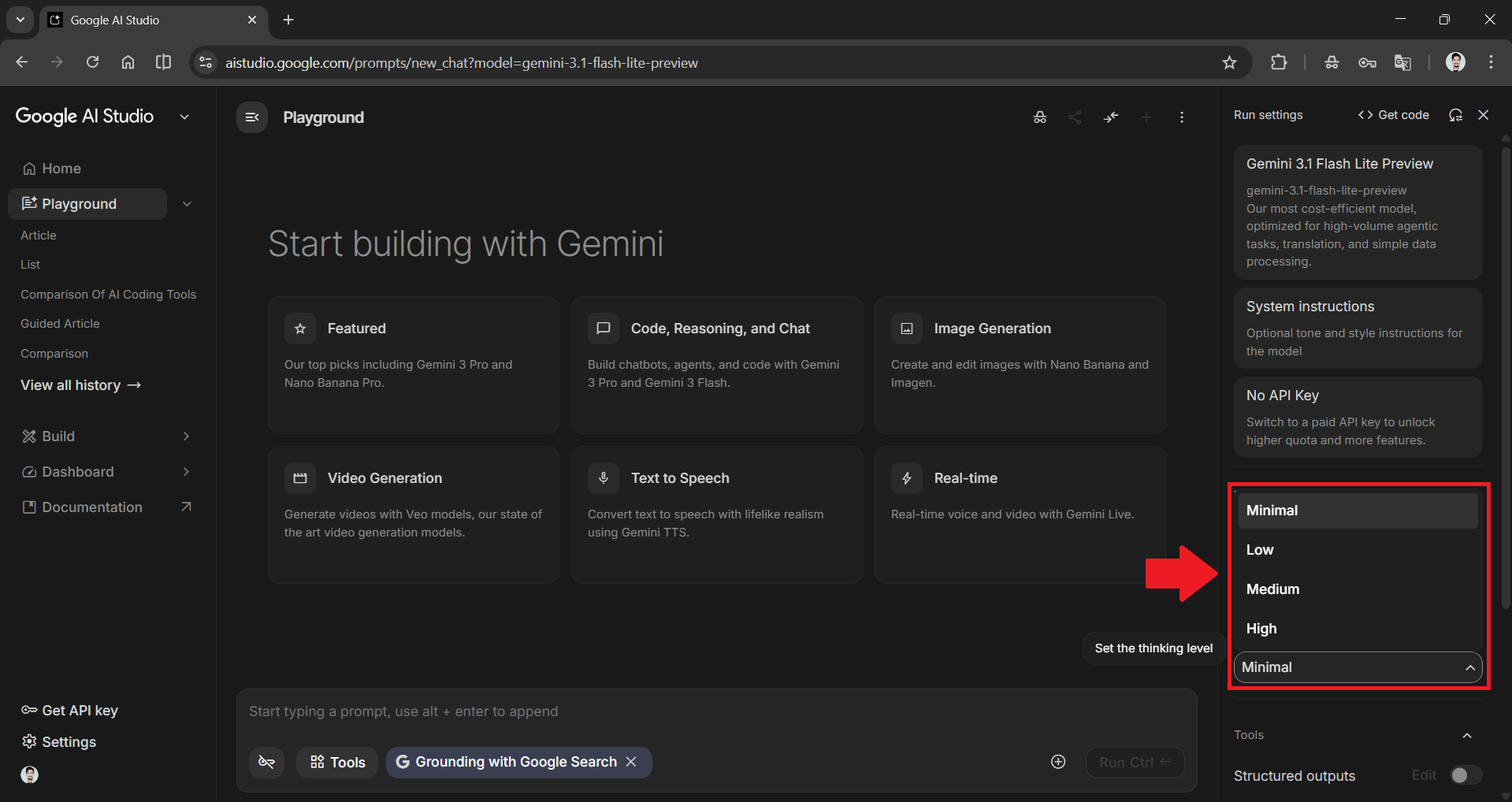

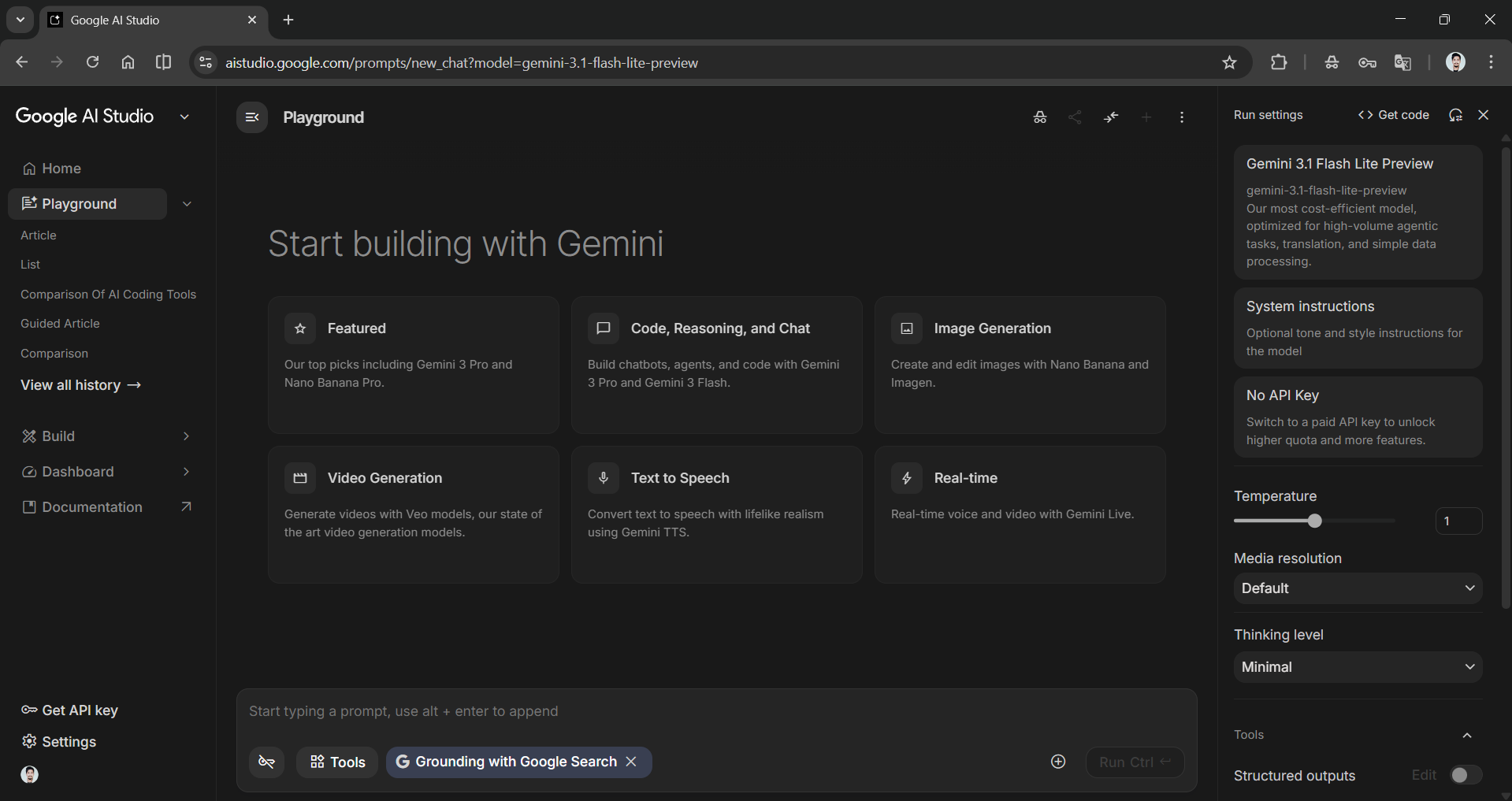

One genuinely distinctive feature is adjustable thinking levels, available in both Google AI Studio and Vertex AI, which let developers control how much reasoning the model applies to each task:

- Minimal to low reasoning for high-volume, straightforward tasks such as content moderation and translation.

- Medium to high reasoning for more complex outputs like generating user interfaces, dynamic dashboards, or multi-step agentic workflows.
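In practice, this means routing each task type to a thinking level. The sketch below maps hypothetical task categories to the levels described above and builds a Gemini API `generateContent` request body. The field names (`generationConfig.thinkingConfig.thinkingLevel`) are an assumption based on the REST shape used for other Gemini 3 models, and the task names and helper function are illustrative only; check the current Gemini API reference before relying on them. The model ID is deliberately left out, since it goes in the request URL.

```python
import json

# Hypothetical task categories mapped to the guidance above:
# minimal/low for high-volume simple work, medium/high for complex outputs.
THINKING_LEVEL_BY_TASK = {
    "moderation": "minimal",
    "translation": "low",
    "dashboard_generation": "medium",
    "agentic_workflow": "high",
}


def build_generate_content_body(task: str, prompt: str) -> dict:
    """Build a generateContent request body with a per-task thinking level.

    ASSUMPTION: field names follow the REST shape documented for other Gemini 3
    models; verify against the Gemini API reference for 3.1 Flash-Lite.
    """
    level = THINKING_LEVEL_BY_TASK.get(task, "low")  # unknown tasks default to a cheap setting
    return {
        "contents": [{"role": "user", "parts": [{"text": prompt}]}],
        "generationConfig": {"thinkingConfig": {"thinkingLevel": level}},
    }


if __name__ == "__main__":
    body = build_generate_content_body("moderation", "Classify this comment: 'Great post!'")
    print(json.dumps(body, indent=2))
```

Keeping the mapping in one table makes it easy to raise or lower reasoning effort per task later without touching the request code.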

Practical use cases confirmed by early-access developers include:

- High-volume content translation and moderation.

- Real-time wireframe, UI, and dynamic dashboard generation.

- Versatile multi-step SaaS agent execution.

- Rapid classification and sorting of large image datasets.

Companies are already using 3.1 Flash-Lite in production, with testers noting that it handles complex inputs with the precision of larger-tier models while maintaining instruction adherence at scale.

The model is rolling out in preview via the Gemini API in Google AI Studio and for enterprise users via Vertex AI.

Google Gemini 3.1 Flash-Lite in AI Studio and Vertex AI


GPT‑5.3 Instant by OpenAI:

OpenAI's release takes a different approach, one that may be less flashy on spec sheets but will be felt immediately by the millions of people who use ChatGPT daily. GPT‑5.3 Instant is an update to GPT‑5.2 Instant, the most-used model tier in the ChatGPT ecosystem, and its improvements center on three things: tone, relevance, and conversational flow.

The predecessor, GPT‑5.2 Instant, had a documented problem: it would sometimes refuse questions it could reasonably answer, or preface responses with lengthy safety disclaimers and moralizing preambles. This style frustrated users, and both critics and users described it as "cringe."

GPT‑5.3 Instant tackles these issues directly with:

- Significantly fewer unnecessary refusals: The model gives direct answers when it should, without lengthy preambles about what it won't do.

- Reduced preachy framing: OpenAI specifically called out phrases like "Stop. Take a breath." as the kind of overbearing language this update removes.

- Smarter web synthesis: Instead of returning long lists of links, the model now blends its own knowledge with web results to surface the most contextually relevant answer upfront.

- Measurable accuracy gains: On internal evaluations in higher-stakes domains (medicine, law, and finance), hallucination rates dropped 26.8% with web search enabled and 19.7% without it; user-flagged factual errors also declined.
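The hallucination figures are easiest to read as relative reductions. That interpretation is an assumption, since the announcement as summarized here does not say whether they are relative or percentage-point drops. The sketch below applies them to a hypothetical baseline rate of 10%.

```python
# Reported hallucination reductions for GPT-5.3 Instant vs GPT-5.2 Instant,
# ASSUMED here to be relative (not percentage-point) reductions.
REDUCTION_WITH_WEB = 0.268
REDUCTION_WITHOUT_WEB = 0.197


def new_rate(baseline_rate: float, relative_reduction: float) -> float:
    """Apply a relative reduction to a baseline hallucination rate."""
    return baseline_rate * (1.0 - relative_reduction)


if __name__ == "__main__":
    baseline = 0.10  # hypothetical: 10 hallucinated answers per 100
    print(f"with web:    {new_rate(baseline, REDUCTION_WITH_WEB):.4f}")
    print(f"without web: {new_rate(baseline, REDUCTION_WITHOUT_WEB):.4f}")
```

Under that assumption, a 10% baseline falls to about 7.3% with web search and about 8.0% without it. The reduction is meaningful, but hallucinations do not disappear.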

The model is available now in ChatGPT and via the API as `gpt-5.3-chat-latest`. GPT‑5.2 Instant will remain available to paid users in the Legacy Models section until June 3, 2026, before being retired.
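For developers who want to try the new model, here is a minimal sketch using the official `openai` Python package. The model name comes from the announcement. Serving it through the Chat Completions endpoint is an assumption, since the source only says "via the API." The helper names are illustrative, and the network call runs only when `OPENAI_API_KEY` is set.

```python
import os

MODEL = "gpt-5.3-chat-latest"  # API name given in the announcement


def build_messages(question: str) -> list[dict]:
    """Assemble a minimal Chat Completions message list."""
    return [{"role": "user", "content": question}]


def ask(question: str) -> str:
    """Send one question to the model; needs the `openai` package and OPENAI_API_KEY."""
    from openai import OpenAI  # imported lazily so the helpers above work without the package

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(model=MODEL, messages=build_messages(question))
    return response.choices[0].message.content


if __name__ == "__main__" and os.environ.get("OPENAI_API_KEY"):
    print(ask("Explain the difference between a Roth IRA and a traditional IRA in two sentences."))
```

Pinning the model name in one constant makes it easy to compare answers against GPT‑5.2 Instant while both are still available.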

Conclusion: Who Should Use What?

These two models aren't really competing for the same users; they solve different categories of problems.

If you're a developer, data engineer, or enterprise architect building applications that process large volumes of input, such as document classification, real-time content pipelines, multimodal analysis, or agentic UI generation, Gemini 3.1 Flash-Lite could be the right model for your workflow.

If you're a knowledge worker, researcher, writer, student, or non-technical professional who uses ChatGPT as a daily thinking and productivity tool, GPT‑5.3 Instant is the more relevant release for you. The improvements are real and practical: expect fewer dead ends, less performative caution, better web-grounded answers, and fewer hallucinations.

Neither model is the largest in its series, and neither is trying to be the most powerful. What matters is which one fits more seamlessly into your actual work: Google's Gemini 3.1 Flash-Lite, an infrastructure model built for developers, or OpenAI's GPT‑5.3 Instant, a generalist model built for everyday users.
